From public clouds to hybrid – changes in data management

If you’ve been reading tech news sites like this one and keeping abreast of the changing scene in the industry, you’ll recall that only a few years ago the talk was all about moving to ‘The Cloud’. As the price of storage and computing power dropped, enterprises began moving their computing provision off the premises and into the public cloud.

Public clouds offered massive scalability, a centralized infrastructure, and (this aspect made the accountants happy) payment models that lowered capital expenditure (CAPEX), as computing power could effectively be leased on an everything-as-a-service (XaaS) basis.

More recently, the hell-for-leather rush towards the public cloud has slowed, as real-life experience has shown that wholesale contracting-out is neither entirely practical nor entirely desirable, and is expensive in the long term. Most large enterprises today are ending up with what has become known as multi-cloud (or hybrid cloud) provision: a mixture of public and private (in-house) clouds, plus a degree of old-style, in-house bare-metal hosting.

Today’s distributed clouds can also encompass intelligent edge devices, remote offices and, via the internet of things (IoT), data collection and processing from sources as disparate as oil installations, wind farms and security cameras.

Why the multi-cloud?

Spreading services across mixed topologies brings clear security benefits. Failover in the event of a disastrous outage is one, and there is also an element of bet-hedging that lets most CIOs sleep more soundly at night.

Performance is also a factor. When multiple instances of a service are deployed, traffic can be switched between them as demand dictates, or each instance can simply be chosen for its geographical closeness to a market.

Keeping a range of options open has been found to make infrastructure highly responsive to changes in business policy. Given the wide range of use cases and their differing requirements for performance, security, speed and cost, the old one-size-fits-all approach no longer works effectively.

How to manage the multi-cloud

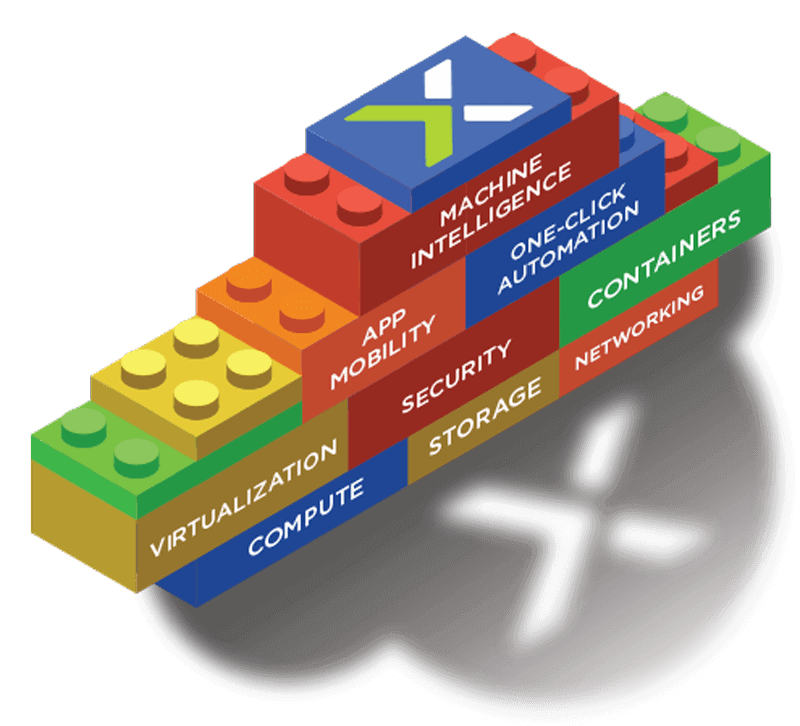

The same technologies that powered the first public clouds (or rather, their descendants) are now available to any enterprise or IT organization through what is commonly called hyperconverged infrastructure. These technologies are also delivered as full-stack solutions that provide all the infrastructure needed to support a range of workloads or applications. Hyperconvergence can be deployed initially for a single use case and then scaled very simply.

As a prime example, the Nutanix solution unites clouds regardless of their type or location. With central management, every use, from a single app to multiple complex deployments, can be handled seamlessly.

Nutanix was the first data infrastructure provider to come up with the notion of hyperconverged infrastructure as a new way of delivering datacenter solutions.

Hybrid clouds in the future

Just as the talk a few years ago was all of the move to the cloud, today the talk is of the internet of things (IoT) and big data. Intelligent devices have the power to change our interactions with the physical world, but one challenge the IoT presents is the massive amount of data it can collect.

It is more efficient to collect and process data as locally as possible, so that it can become part of a unified data ‘lake’ with little effort. Although a simple public cloud may seem a cheap and obvious repository, it is not suited to the volumes of data that the IoT creates.

The answer, therefore, is the hybrid cloud: data storage and processing dictated by business strategy rather than by physical constraints. By building private clouds that look and operate like public cloud spaces, organizations can create true hybrids of public and private, with clouds on ‘both sides’, if you will.

If you’d like to speak to the leader in hyperconverged infrastructure for the hybrid cloud, contact Nutanix today.