A real-time API platform for customers who demand real-time experiences

Successful developers of new applications know there’s a great deal more to building a great “customer experience” than concentrating on the specialized areas of UX and UI.

While that presentation layer is absolutely critical to the success of an application or service, it’s only one element. The speed, security, and perceived solidity or reliability of the end user’s experience are at least as important, if not more so, if the app is to hit the sweet spot for users.

If any of the disparate parts of the customer experience fall short, the democratic nature of the internet usually means that a competitor’s product is only a click or screen-tap away. That, of course, is how disruptors in any vertical find their niche: a digital-first start-up with a well-rounded product can easily usurp a more established company’s less capable offering. In today’s app-centric world, the quality of the customer experience matters more than reputation, history, or provenance.

New measuring sticks

Over the last few years, building applications that measurably perform well and prove agile and scalable has become only part of the success equation. That’s because monolithic, standalone applications and services no longer exist in the way they used to. Today’s “applications” are, in fact, aggregations of interconnected services and components that work together to achieve a desired outcome. What we consider a single application may consist of hundreds or even thousands of discrete components networked together.

Integration with other internal systems, and with partners’ and providers’ apps and services, is a critical part of the finished product. In short, a lightning-fast application with a perfect user interface and a unique, wildly useful service will still fail if the way it interacts with other systems, via APIs, isn’t efficient, safe, fast, and reliable.

A survey by Akamai found that in 2018, 83 per cent of internet traffic was API traffic. Large institutions that deliver their services digitally may be making hundreds of thousands of API calls a second across the enterprise, and a single component of an application may be generating four or five thousand API requests a second. For end users, whose only concern is the customer experience, a slowdown or stumble anywhere in this API chain is a failure.

Whether that stumble is caused by a poorly coded application, a memory leak, a buffer overflow, or an overwhelmed API is utterly irrelevant. Tuning API traffic and managing individual APIs is therefore now critical to any business’s digital offerings.

Defining priorities

Long-serving IT managers will be used to the hype surrounding ground-breaking new products, which are often pitched to them by enthusiastic staff or sales reps and usually come with a price tag attached. But if API management is a paramount concern (and we at Tech Wire Asia think it should be), the good news is that NGINX already provides a full stack of API management capabilities, spanning its widely used open-source software and its commercial application delivery platforms, NGINX Controller and NGINX Plus.

In fact, 40 per cent of NGINX deployments are used to manage APIs, alongside NGINX’s more familiar roles as a web server, proxy, or traffic shaper.

As an open-source platform that is improved and honed by a community of thousands, NGINX’s API management code is, above all else, fast: the platform can route, authenticate, shape, and cache API requests in less than 30ms. Delivering that kind of responsiveness reliably, even when demand suddenly ramps up, is a critical part of many enterprises’ offerings, which is why so many organizations use NGINX in this arena. APIs might connect microservice to microservice, or a more monolithic application to multiple external parties, or, more likely, both of those and everything in between.

But whatever the scale, the result is a platform on which customer experiences can be built reliably, with latency and other delay-inducing overhead kept to a minimum.
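To make “shaping” API traffic a little more concrete, here is a minimal leaky-bucket rate limiter in Python. NGINX’s own `limit_req` module documents that it uses the leaky-bucket method, but this is not NGINX code: it is a simplified sketch, and the `rate` and `capacity` values used below are hypothetical. Timestamps are passed in explicitly so the behaviour is deterministic.

```python
from dataclasses import dataclass


@dataclass
class LeakyBucket:
    """Minimal leaky-bucket limiter (illustrative sketch, not NGINX's implementation).

    Each admitted request adds one unit to the bucket; the bucket drains at a
    fixed `rate`. Requests that would overflow `capacity` are rejected.
    """
    rate: float          # units drained per second (hypothetical setting)
    capacity: float      # maximum fill level, i.e. the tolerated burst (hypothetical)
    level: float = 0.0   # current fill level
    last: float = 0.0    # timestamp (seconds) of the previous call

    def allow(self, now: float) -> bool:
        # Drain the bucket for the time elapsed since the previous request.
        elapsed = max(0.0, now - self.last)
        self.level = max(0.0, self.level - elapsed * self.rate)
        self.last = now
        # Admit the request only if it fits in the remaining capacity.
        if self.level + 1 <= self.capacity:
            self.level += 1
            return True
        return False  # over the limit: this sketch simply rejects the request


if __name__ == "__main__":
    bucket = LeakyBucket(rate=10, capacity=5)
    burst = [bucket.allow(0.0) for _ in range(8)]
    print(burst)              # first five admitted, last three rejected
    print(bucket.allow(0.5))  # half a second later the bucket has drained
```

With `rate=10` and `capacity=5`, a burst of eight simultaneous requests admits five and rejects three; half a second later, five units have drained and new requests are admitted again. This sketch rejects excess requests outright, whereas NGINX’s `limit_req` can instead queue and delay excess requests up to a configured burst size.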

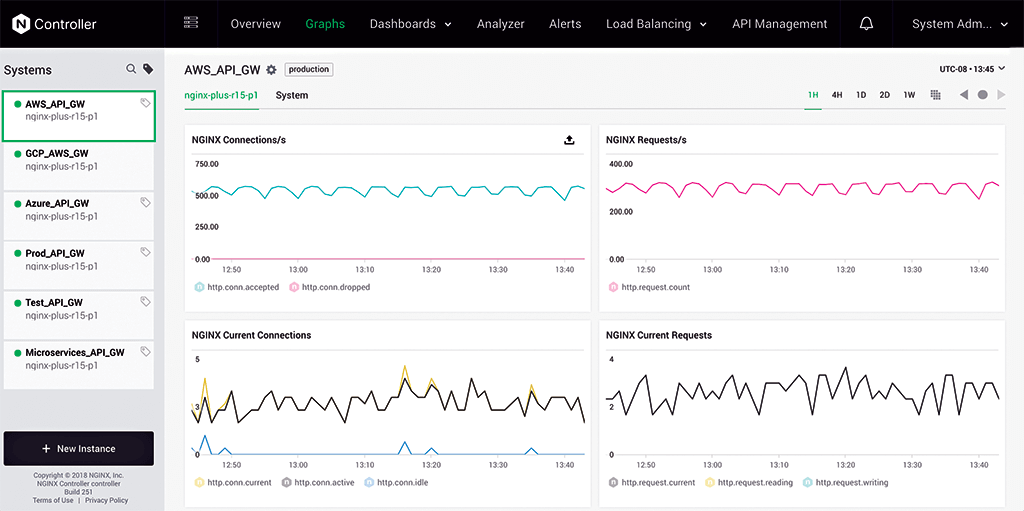

Source: NGINX

A sub-30ms response time is, to human senses, effectively real-time. In some ways, this is analogous to the long-standing importance of page-render speed, where slower websites lost visitors, as highlighted 23 years ago in this article (warning: contains references to 14.4k baud modems).

The question is: is your API real-time? You can test your API’s latency with rtapi, an open-source real-time API latency benchmarking tool created by NGINX. It tests the responsiveness of your API gateways and endpoints and generates a PDF report that you can easily share with your peers.
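For a rough, do-it-yourself check before reaching for a dedicated benchmarking tool, the sketch below times repeated calls and compares a latency percentile against the article’s 30ms threshold. It is not rtapi and makes no claim about how rtapi works; the choice of the 99th percentile and the nearest-rank method are assumptions made for illustration.

```python
import math
import time
from typing import Callable, List, Sequence

REAL_TIME_BUDGET_MS = 30.0  # the "real-time" threshold cited in the article


def percentile(samples_ms: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile of latency samples; pct must be in (0, 100]."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples_ms)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def measure(call: Callable[[], object], n: int = 100) -> List[float]:
    """Time n invocations of `call` (e.g. one API request) in milliseconds."""
    samples = []
    for _ in range(n):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def is_real_time(samples_ms: Sequence[float], pct: float = 99.0,
                 budget_ms: float = REAL_TIME_BUDGET_MS) -> bool:
    """True if the chosen percentile stays under the latency budget."""
    return percentile(samples_ms, pct) < budget_ms


if __name__ == "__main__":
    samples = [12.0, 15.0, 18.0, 22.0, 41.0]  # illustrative numbers, not real data
    print(percentile(samples, 50), percentile(samples, 99))
    print(is_real_time(samples))  # one slow outlier pushes p99 over 30ms
```

To point it at a live endpoint, `call` could wrap a standard-library request such as `lambda: urllib.request.urlopen(url).read()`, where `url` is your own (hypothetical here) API endpoint. Judging by a high percentile rather than the average reflects the article’s point that a stumble anywhere in the chain counts as a failure for the end user.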

Business-criticality

The NGINX API management platform has two components: NGINX Plus as the API gateway that routes and shapes API traffic, and NGINX Controller, which provides API lifecycle management (defining, publishing, monitoring, and so on). Among other things, administrators can use NGINX Controller to monitor and manage all aspects of API flows, internally and externally. The Controller API Management module even offers a wizard-style deployment feature that makes setting up NGINX Plus as the API gateway quick and simple and, going a step further, integrates with DevOps tools to automate the process. But it’s the speed and reliability of the combined solution when processing APIs at enterprise scale that differentiates the NGINX offering.

In fact, because a response time of less than 30ms is perceived as real-time, that’s how F5 and NGINX refer to the capability. To learn more about the Real-Time API concept, you have several choices:

– Download this eBook.

– Join the on-demand webinar.

– Start a free trial of NGINX Controller for 30 days.
