The DALL-E 3 AI image generator is now available to everyone inside Bing Chat.

- Microsoft’s AI image generator, powered by DALL-E 3, is now available for all Bing users.

- The AI image generator also highlights the challenges of AI content moderation and user safety.

- There are discrepancies in the results achieved with the same prompt in Bing Chat and Bing Image Creator.

Microsoft has announced that DALL-E 3, the newest iteration of OpenAI’s AI image generator, is now accessible to all Bing Chat and Bing Image Creator users. Bing Enterprise users were the first to get access, followed by Bing Image Creator users. Now the gates have opened for everyone.

Bing is gaining traction with DALL-E 3 even before OpenAI integrates it into its own platform, ChatGPT. That integration is scheduled for later this month and will initially be restricted to premium users. Given this head start, Microsoft might dominate the image generation arena for some time.

As the name suggests, DALL-E 3 is OpenAI’s third take on its controversial AI image generation tool. The enhancement in this version is pronounced, with the firm emphasizing its refined prompt comprehension. It’s geared to generate more imaginative and lifelike visuals. A notable change is the ease of use – with DALL-E 3 woven into Bing Chat and ChatGPT, users interact with a chatbot to mold their image, eliminating the need for constant prompt adjustments.

The precision and artistry of the Bing AI image generator

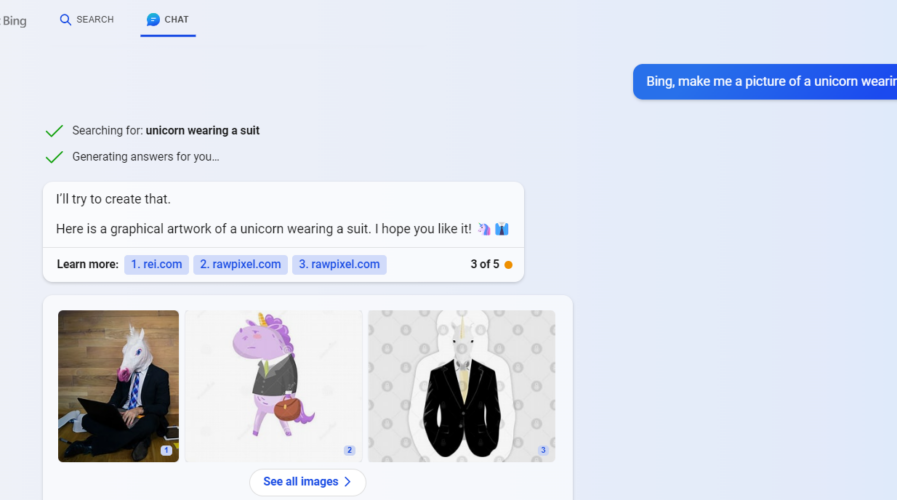

Curiosity piqued, we dove straight into Bing Image Creator to explore this innovation. Our first imaginative scenario was peculiar: “An astronaut landed on the Statue of Liberty made out of ice during a summer season.” Eagerly, we fed this into the system and awaited the AI’s visual rendition.

An astronaut landed on the Statue of Liberty made out of ice during a summer season. (Source – Bing Image Creator)

Upon receiving the image generated by DALL-E 3, we were taken aback by the precision and intricate detailing of the visuals. The juxtaposition of the astronaut with the icy Statue of Liberty against a summery backdrop captured our prompt’s essence. The model managed to bring out the abstract nature of our request and ensured that the components were contextually appropriate.

What was most striking though was the play on contrasts. The cold, icy tones of the Statue of Liberty beautifully contrasted with the warm hues of a summer setting, making it both visually captivating and a testament to the AI’s prowess.

Additionally, we appreciated the user-friendliness of the interface. The conversational approach with Bing Image Creator allowed us to effortlessly input our creative whims, making the process seamless and intuitive.

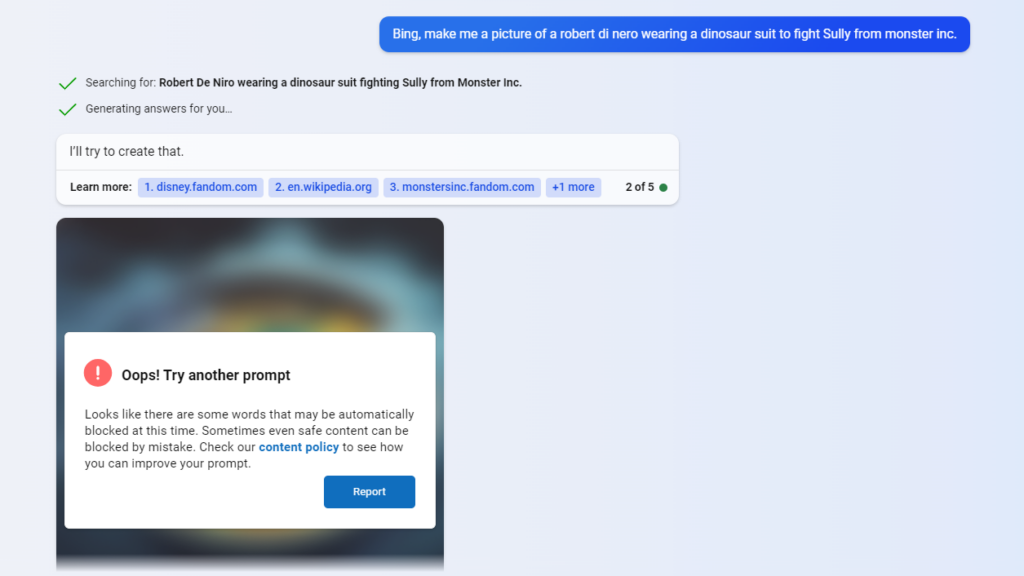

We were intrigued and keen to throw another curveball at DALL-E 3 after our initial experiment. So, we opted for a whimsical image prompt: “Robert De Niro wearing a dinosaur suit to fight Sully from Monsters Inc.”

However, when the result appeared, it deviated from our expectations. Somewhat amusingly, we were presented with an image of Robert De Niro donning a Sully-inspired outfit, squaring off against a dinosaur. While it wasn’t quite what we envisioned, it gave us a chuckle. It’s worth noting that even though the AI swapped the two costumes, the quality of the image and the character representations were top-notch.

Bing Image Creator also showed inaccuracies. (Source – Bing Image Creator)

This shows that while DALL-E 3’s capabilities are undoubtedly impressive, there can still be occasional hiccups in understanding or translating complex prompts. It’s a reminder that AI, no matter how advanced, retains an element of unpredictability. We’re wondering if this is simply an interpretative error or if the model is showcasing its unique sense of humor. Do AIs laugh at deep logic jokes?

It’s not perfect yet, though

Our experience with Bing Chat, though, threw us a curveball. When we entered the identical Robert De Niro prompt into this platform, the response was distinctly different. We were met with a message stating, “Oops! Try another prompt. Looks like there are some words that may be automatically blocked at this time. Sometimes even safe content can be blocked by mistake. Check our content policy to see how you can improve your prompt.”

This discrepancy between Bing Chat and Bing Image Creator illuminates the intricacies of AI content moderation. It suggests that even within the same company’s suite of products, different platforms may employ different filters, safety checks, and interpretation mechanisms.

Bing Chat returns an error for the same prompt.

One possible explanation is that Bing Chat, being a conversational AI tool, is programmed to be more conservative in its responses. Given the potential for misuse of conversational AI tools, it might have an additional layer of safety checks to prevent the generation of inappropriate or potentially harmful content.

Another is that the AI in Bing Chat analyzes and interprets the input text differently than the AI in Bing Image Creator. One might be more sensitive to text and context, while the other is more visually driven.
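To make the layered-filter idea concrete, here is a minimal sketch in Python of how two front ends that share one image filter could still respond differently to the same prompt if one of them adds an extra check. Everything in it is an assumption made for illustration: the `PromptFilter` class, the `submit` function, and the blocked terms are hypothetical, and Microsoft has not published how its filters work or which words triggered the block we saw.

```python
from dataclasses import dataclass, field


@dataclass
class PromptFilter:
    """Hypothetical moderation layer that rejects prompts containing blocked terms."""
    name: str
    blocked_terms: set[str] = field(default_factory=set)

    def allows(self, prompt: str) -> bool:
        # Simple case-insensitive substring match; real systems are far more complex.
        lowered = prompt.lower()
        return not any(term in lowered for term in self.blocked_terms)


def submit(prompt: str, layers: list[PromptFilter]) -> str:
    """Run the prompt through each layer in order; the first rejection wins."""
    for layer in layers:
        if not layer.allows(prompt):
            return f"blocked by {layer.name}"
    return "accepted"


# Illustrative term lists only; the real block lists are not public.
shared_image_filter = PromptFilter("image filter", {"gore"})
chat_extra_filter = PromptFilter("chat filter", {"fight"})

# Assumption: the chat front end stacks one extra layer on top of the shared one.
image_creator_layers = [shared_image_filter]
chat_layers = [chat_extra_filter, shared_image_filter]

prompt = "Robert De Niro wearing a dinosaur suit to fight Sully from Monsters Inc."
print(submit(prompt, image_creator_layers))  # accepted
print(submit(prompt, chat_layers))           # blocked by chat filter
```

The point of the sketch is only that the outcome depends on which layers sit in front of the image model, not on the model itself. That would be consistent with what we observed, but it remains a guess.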

Unveiling the safety measures

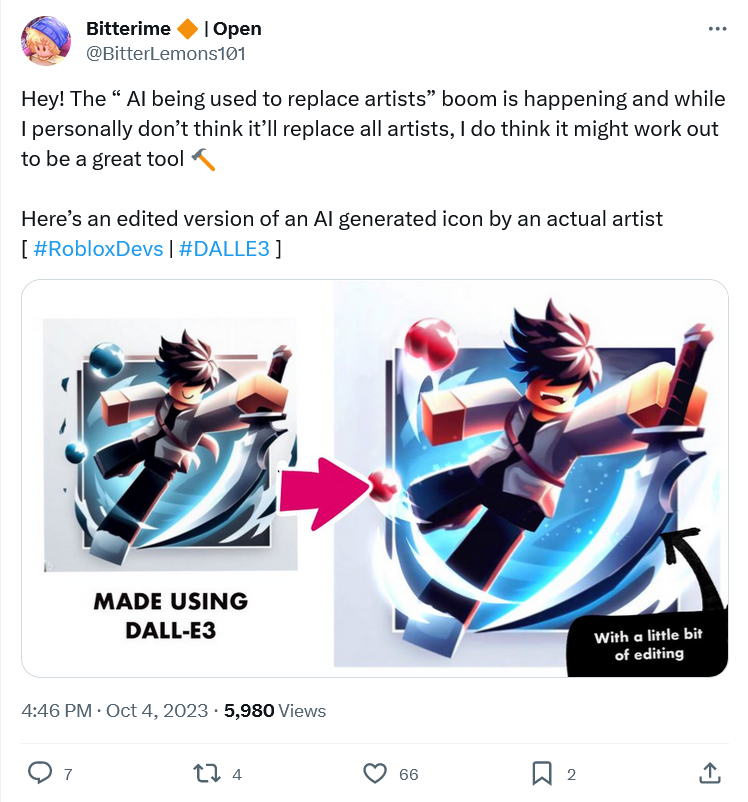

OpenAI has also incorporated advanced safety mechanisms into DALL-E 3. Specifically, it is engineered to avoid reproducing the likenesses of public figures and to steer clear of generating offensive or NSFW imagery. Since Bing Image Creator’s inception, users have generated over a billion images with it, for purposes including illustrations, digital wallpapers, and social media content.

The assimilation of DALL-E 3 into the likes of Bing and ChatGPT is projected to augment its utility even further. Nevertheless, as the realism of the images produced by this AI image generator evolves, it brings to the fore ethical dilemmas surrounding deepfakes. Microsoft has fortified its platform with safety precautions to counteract these concerns, such as embedding digital watermarks on all images and establishing content moderation filters.

Every image generated by Bing Image Creator is embedded with a discreet digital watermark aligned with the C2PA standard. This watermark chronicles the image’s date and time of creation and attests to its AI origin. Complementing this, a rigorous content moderation framework curtails the generation of harmful or unsuitable images. This system aligns with Bing’s operating principles and community guidelines, filtering out any visuals that depict nudity, aggression, discriminatory speech, or illicit activities.

But Microsoft’s ambitions for DALL-E’s tech stretch beyond just Bing. Plans are in motion to infuse this technology into other domains, such as the envisaged AI image creation feature named ‘Paint Cocreator’ for the iconic Paint application, potentially ushering DALL-E’s prowess straight into the Windows environment.

We’ll undoubtedly experiment more with DALL-E 3, as its results – spot-on or off-kilter – never fail to entertain. It’s a fascinating glimpse into the evolving world of AI, where precision and whimsy coexist.
