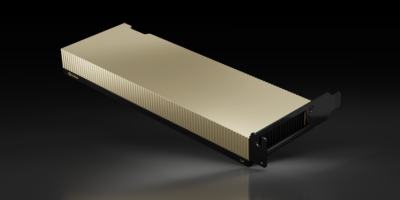

Adobe launches beta of first Firefly model focused on commercial use (Source – Adobe)

Adobe Firefly uses generative AI to create images

- Adobe launches the beta version of its first Firefly model, focused on commercial use, which will be directly integrated into Creative Cloud, Document Cloud, Experience Cloud, and Adobe Express workflows.

- Adobe will introduce a “Do Not Train” tag for creators who do not want their content used in model training; the tag will remain associated with content wherever it is used, published, or stored.

- Adobe plans to enable customers to extend Firefly training with their own creative collateral, generating content in their own style or brand language.

Generative AI continues to reshape industries as more use cases emerge. Tools built on it can now write essays, compose musical scores, and even suggest where to find the best snack for the day. It's no surprise that the technology has found its way into images.

The latest release of ChatGPT shows how far this has gone. GPT-4, the model behind it, can generate human-like text and computer code from almost any prompt, and it can also accept images as input. Given this latest innovation, technology companies are exploring ways to build generative AI into their own products and services.

When it comes to images, one of the biggest challenges users face is copyright. Copyright-free images are available, but users often can't find one that matches what they need.

This is where companies like Adobe hope to deploy generative AI solutions. Adobe Firefly is a new family of creative generative AI models focused on generating images and text effects. This new solution is expected to bring even more precision, power, speed, and ease directly into Creative Cloud, Document Cloud, Experience Cloud, and Adobe Express workflows where content is created and modified. Adobe Firefly will be part of a series of new Adobe Sensei generative AI services across Adobe’s clouds.

Known for its AI innovation, Adobe has already delivered hundreds of intelligent capabilities through Adobe Sensei into applications that hundreds of millions of people rely on. Notable features such as Neural Filters in Photoshop, Content-Aware Fill in After Effects, Attribution AI in Adobe Experience Platform, and Liquid Mode in Acrobat empower Adobe customers to create, edit, measure, optimize, and review billions of pieces of content with power, precision, speed, and ease. These innovations are developed and deployed in alignment with Adobe’s AI ethics principles of accountability, responsibility, and transparency.

According to David Wadhwani, president of Digital Media Business at Adobe, generative AI is the next evolution of AI-driven creativity and productivity, transforming the conversation between creator and computer into something more natural, intuitive, and powerful.

“With Firefly, Adobe will bring generative AI-powered ‘creative ingredients’ directly into customers’ workflows, increasing productivity and creative expression for all creators, from high-end creative professionals to the long tail of the creator economy,” added Wadhwani.

Adobe Firefly: Generative AI for Creators

Generative AI aims to simplify work and extend what people can do. With this in mind, Adobe designed Firefly to let creators work at the speed of their imaginations. In essence, Adobe Firefly will allow anyone, regardless of skill level, to describe what they want in their own words and generate content the way they envision it. Adobe's plans go beyond images to audio, vectors, videos, and 3D, as well as creative ingredients like brushes, color gradients, and video transformations.

The first Adobe Firefly model will empower customers of all experience levels to generate high-quality images and stunning text effects. (Source – Adobe)

With Adobe Firefly, producing limitless variations of content and making repeated changes, all on-brand, will be quick and simple. Adobe will also integrate Firefly directly into its industry-leading tools and services, so users can tap into generative AI within their existing workflows.

Because Firefly is launching as a beta, Adobe will engage with the creative community and customers as it evolves this transformational technology and begins integrating it into its applications. The first applications to benefit from Adobe Firefly integration will be Adobe Express, Adobe Experience Manager, Adobe Photoshop, and Adobe Illustrator.

Adobe addresses copyright concerns through its training data. Its first model, which focuses on images and text effects, was trained on Adobe Stock images, openly licensed content, and public domain content whose copyright has expired, and Adobe states that it is designed to generate content that is safe for commercial use.

According to Adobe, the hundreds of millions of professional-grade, licensed images in Adobe Stock are among the highest quality in the market and help ensure Adobe Firefly won't generate content based on other people's or brands' IP. Future Adobe Firefly models will draw on a variety of assets, technology, and training data from Adobe and others, and Adobe says it will continue to prioritize countering potentially harmful bias as those models are rolled out.

At the same time, Adobe intends to build generative AI in a way that enables customers to monetize their talents, much like Adobe has done with Adobe Stock and Behance. Adobe is developing a compensation model for Adobe Stock contributors and will share details once Adobe Firefly is out of beta. Adobe is also planning to make Adobe Firefly available via APIs on various platforms to enable customers to integrate it into custom workflows and automation.
