There’s a growing call for “AI pause” amidst a rise in chatbots. Why the sudden pushback?

Source: Shutterstock

- In recent weeks, a slew of AI chatbots have been launched for the general public, leading to growing concerns among technology experts.

- Elon Musk and other industry executives are calling for a six-month pause in developing systems more powerful than OpenAI’s newly launched GPT-4.

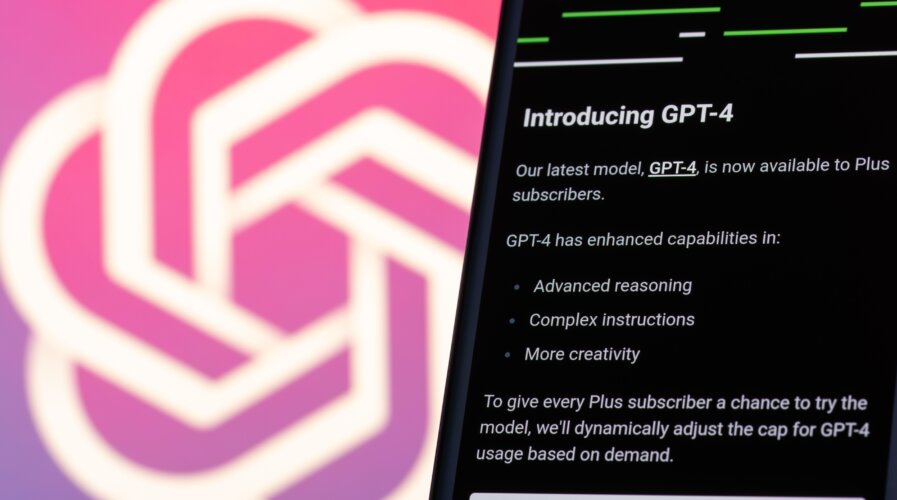

This year is shaping up to be the year of generative artificial intelligence (AI), with new developments, including AI chatbots, arriving at a breakneck pace. However, not everyone is happy with the progress. Industry executives, including Elon Musk, and other AI experts are calling for a six-month pause in developing systems more powerful than OpenAI’s newly launched GPT-4.

The fourth iteration of Microsoft-backed OpenAI’s GPT (Generative Pre-trained Transformer) program was launched earlier this month. AI researchers refer to GPT-4 as a neural network, a mathematical system that learns skills by analyzing data. Neural networks are the same kind of technology that digital assistants like Siri and Alexa use to recognize spoken commands and that self-driving cars use to identify pedestrians.

“Powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable,” stated an open letter issued by the Future of Life Institute. According to the European Union’s transparency register, the non-profit is primarily funded by the Musk Foundation, the London-based group Founders Pledge, and the Silicon Valley Community Foundation.

📢 We're calling on AI labs to temporarily pause training powerful models!

Join FLI's call alongside Yoshua Bengio, @stevewoz, @harari_yuval, @elonmusk, @GaryMarcus & over a 1000 others who've signed: https://t.co/3rJBjDXapc

A short 🧵on why we're calling for this – (1/8)

— Future of Life Institute (@FLIxrisk) March 29, 2023

The letter is timely: ChatGPT and other AI chatbots have been attracting US lawmakers’ attention, with questions about their impact on national security and education. Even the EU police force Europol warned earlier this week about the potential misuse of such chatbots in phishing attempts, disinformation, and cybercrime.

At the same time, the UK government has unveiled proposals for an “adaptable” regulatory framework around AI.

“AI stresses me out,” Musk said earlier this month. He is one of the co-founders of industry leader OpenAI, and his carmaker Tesla uses AI for its autopilot system, which makes his signing of the letter controversial. Musk also believes it is right to seek a regulatory authority to ensure that the development of AI serves the public interest.

At the time of writing, 1,125 technology leaders and researchers have urged AI labs to pause the development of the most advanced systems, warning that AI tools present “profound risks to society and humanity.” Other signatories include:

- Steve Wozniak, a co-founder of Apple.

- Stability AI chief executive Emad Mostaque.

- Researchers at Alphabet-owned DeepMind.

- AI heavyweights Yoshua Bengio, often referred to as one of the “godfathers of AI,” and Stuart Russell, a research pioneer in the field.

The letter also contends that developers of AI, including those creating chatbots, are “locked in an out-of-control race to develop and deploy ever more powerful digital minds that no one — not even their creators — can understand, predict, or reliably control.” Since OpenAI released ChatGPT, the push to develop more powerful AI chatbots has turned into a race that could determine the industry’s next leaders.

So far, significant AI chatbots announced by Big Tech alone include Microsoft’s Bing and Google’s Bard, followed by a series of launches from smaller tech companies. Most of the AI chatbots unveiled can hold human-like conversations, write essays on various topics, and perform more complex tasks, like writing computer code.

These tools have, however, been criticized for getting details wrong and for their tendency to spread misinformation. The open letter therefore calls for a pause in developing AI systems more powerful than GPT-4, so that “shared safety protocols” for AI systems can be developed. “If such a pause cannot be enacted quickly, governments should step in and institute a moratorium,” it added.
