The evolution of facial animation and facial motion capture

- Game developers are pushing the envelope to create more realistic experiences as technology advances and more images can be produced in real-time

- Facial scanning is one of the major technological advancements in the field of facial animation and facial motion capture

Today’s players want more control over their characters, and the lead character must look as real as possible. A character’s facial animation, for instance, must convey incredibly lifelike expressions and emotions. As gamers develop more empathy for the character, they become more invested in the game.

Needless to say, gamers naturally want their characters’ surroundings to be as interactive as possible.

Studios are aware of this and want to bring their characters to life. As a result, they need more animators to produce an enormous variety of animations for every circumstance. Studios are also increasingly using the subtle character traits and close-up facial animation found in animated films.

Game developers are pushing the envelope to create more realistic experiences as technology advances and more images can be produced in real-time. If a game aims for realistic aesthetics and body mechanics, its characters need an equally realistic amount of facial motion to keep players connected to them.

Virtuos recently held a webinar on “The Evolution of Facial Animation and Facial Motion Capture” with experts including Kyle Renteria, Creative Director at CounterPunch, and Alex Bittner, Senior Artist/Animator at NetherRealm Studios, to discuss how advancements in facial animation and motion capture have changed how video games are developed today.

Game changers in facial animation

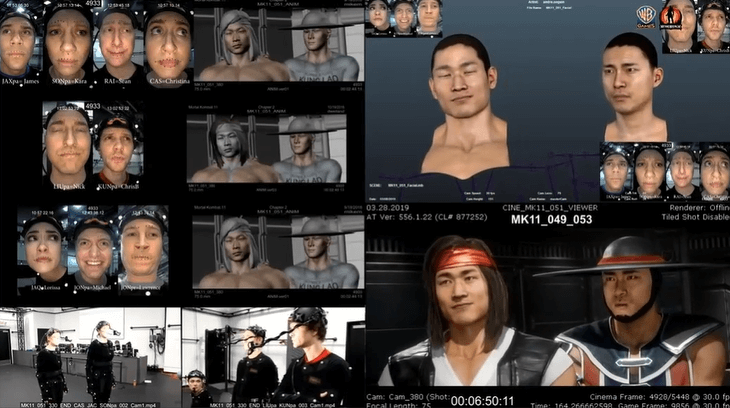

Bittner and Renteria agreed that one of the greatest recent game changers is facial scanning. Facial scanning uses scan data of a real face to produce blendshapes, driven by facial action units, that can then be used in game engines.

Put simply, a model or actor comes in and sits on a chair while completely surrounded by cameras. The cameras scan their face and head in three dimensions, creating a 3D model that game and animation designers can use.

According to Bittner, having a model act out the desired facial expressions makes it easier to create precise face shapes and muscle movements.

“Mortal Kombat 11 was the first Mortal Kombat game where we’ve used the facial scanning,” said Bittner. “Johnny Cage, a character from the game, is one where we actually use a real person’s face, that has been tweaked and modelled a little bit to become our character, and make it higher quality, and more realistic version.”

Renteria added that the Facial Action Coding System (FACS) is another key technological development in the field. FACS is a comprehensive, anatomically based system for describing all visually perceptible facial movements. It breaks facial expressions down into discrete muscle movements known as Action Units (AUs).

“Before (FACS), when it was just joints, you could only get so far with the expressions. But building it off how the muscles work, it really improved how much more emotion, expression, and different feelings you could get, and it feels more real to the audience,” Renteria shared.
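To make the idea of blendshapes driven by Action Units concrete, here is a minimal sketch of how a face pose could be mixed from a neutral scan plus per-AU offsets. It is an illustration only, not the pipeline either studio described: the function name `blend_face`, the two-vertex "mouth", and the offset values are all hypothetical, and real rigs use thousands of vertices. The AU names follow standard FACS numbering (AU12 is the lip corner puller, AU15 the lip corner depressor).

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]


def blend_face(
    neutral: List[Vec3],
    deltas: Dict[str, List[Vec3]],
    weights: Dict[str, float],
) -> List[Vec3]:
    """Return neutral + sum(weight * delta) per vertex.

    Each weight is clamped to [0, 1], where 0 means the Action Unit is
    relaxed and 1 means it is fully activated.
    """
    result = [list(vertex) for vertex in neutral]
    for au_name, weight in weights.items():
        weight = max(0.0, min(1.0, weight))
        for i, delta in enumerate(deltas[au_name]):
            for axis in range(3):
                result[i][axis] += weight * delta[axis]
    return [(v[0], v[1], v[2]) for v in result]


# Hypothetical toy data: two vertices standing in for the mouth corners.
NEUTRAL: List[Vec3] = [(-1.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
DELTAS: Dict[str, List[Vec3]] = {
    "AU12_lip_corner_puller": [(-0.2, 0.3, 0.0), (0.2, 0.3, 0.0)],
    "AU15_lip_corner_depressor": [(0.0, -0.3, 0.0), (0.0, -0.3, 0.0)],
}

# A half-strength smile: only AU12 is active, at 50%.
half_smile = blend_face(NEUTRAL, DELTAS, {"AU12_lip_corner_puller": 0.5})
```

Because each Action Unit is a separate offset, an animator can combine several at different strengths, which is one way to read Renteria's point about getting more expression out of a muscle-based setup than out of joints alone.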

Motion capture transforming games

Bittner said motion capture is an amazing tool that has allowed creators to significantly boost not only the speed and quantity of the facial animation they produce, but also its quality and detail.

Renteria built on Bittner’s point, noting that motion capture lets animators “trace” the video data of an actor’s performance and convert the point data into poses for the digital character, which significantly speeds up production.

“The (motion tracking) dots also help us with consistency, improve accuracy, and help us to get closer to the end result that we’re trying to obtain, especially in large quantities of work,” Renteria added.
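As a rough illustration of converting tracked point data into a pose, the sketch below estimates how strongly a single expression is active by comparing tracked dots with their neutral and fully posed positions. This is a simplified least-squares projection chosen for the example, not the solver used by the studios in the webinar; the function name `estimate_weight` and the 2D marker coordinates are hypothetical.

```python
from typing import List, Sequence


def estimate_weight(
    neutral: List[Sequence[float]],
    full_pose: List[Sequence[float]],
    tracked: List[Sequence[float]],
) -> float:
    """Estimate an expression weight in [0, 1] from marker positions.

    Projects the observed marker displacement (tracked - neutral) onto
    the displacement of the fully activated expression
    (full_pose - neutral), which is the least-squares fit for one shape.
    """
    numerator = 0.0
    denominator = 0.0
    for rest, posed, seen in zip(neutral, full_pose, tracked):
        for axis in range(len(rest)):
            full_delta = posed[axis] - rest[axis]
            seen_delta = seen[axis] - rest[axis]
            numerator += seen_delta * full_delta
            denominator += full_delta * full_delta
    if denominator == 0.0:
        # The expression does not move these markers, so nothing can be inferred.
        return 0.0
    return max(0.0, min(1.0, numerator / denominator))


# Hypothetical 2D marker data for two dots at the mouth corners.
neutral_markers = [(0.0, 0.0), (2.0, 0.0)]
smile_markers = [(-0.5, 1.0), (2.5, 1.0)]     # fully activated smile
frame_markers = [(-0.25, 0.5), (2.25, 0.5)]   # dots seen in one video frame

smile_weight = estimate_weight(neutral_markers, smile_markers, frame_markers)
```

Running the same estimate on every frame gives a weight curve over time that an animator can then refine by hand, which fits the consistency benefit Renteria describes for large quantities of work.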

The future of animation – at least in the next five years

The animation industry is evolving quickly, and that won’t stop anytime soon. According to Bittner and Renteria, four major trends will reshape the games industry:

- Bigger, longer games with more content

- Technology that supports the art and lets games engage players in more inventive ways, with better animation and more relatable stories

- More realism and higher quality

- More detail and audience engagement

Bittner has observed that PCs and consoles are gradually moving away from physical discs and toward cloud storage, which allows games to run without size restrictions.

“Downloadable content expansions, for instance, expand the size of games since they require a lot more content and things to fill them. That allows these characters more opportunities for stories, more room for development, and more time than they previously had to do all the little moments and events that make up a story,” Bittner concluded.
